Complex data¶

When your data is very complex - high embedding dimension, internal structures, ... - even small data sizes become difficult to handle.

If you know the internal structures before hand, this helps immensely; we have methods that make image or sound analysis feasible in spite of a high embedding dimension.

Far more difficult is when there is unknown internal structure. One area where this happens a lot is in genomics.

Intrinsic and Ambient Dimension¶

The problem with most classical analysis methods is that their complexity tends to depend on ambient dimension - on the number of features in the data set.

In most cases of interest, the data does not fill out the entire ambient space: data has a relatively high co-dimension.

The lower intrinsic dimension of data is what drives successful modeling.

From Ambient to Intrinsic¶

One approach is to use dimensionality reduction methods to lower the dimension:

- PCA: find linear map that concentrates variances

- Manifold learning: find a non-linear map under a manifold assumption.

- Graph / Complex representations: build a combinatorial model of the data.

When the data is linear enough, PCA can perform very well. Alternative approaches excel when the internal structure of the data is more complicated.

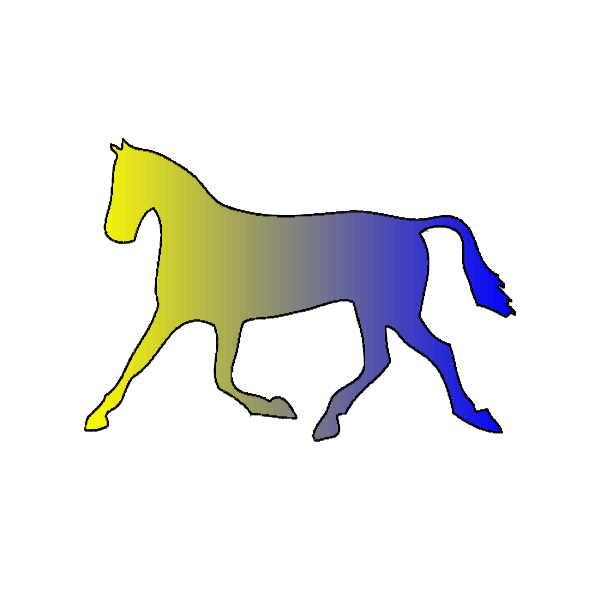

Why Non-linear?¶

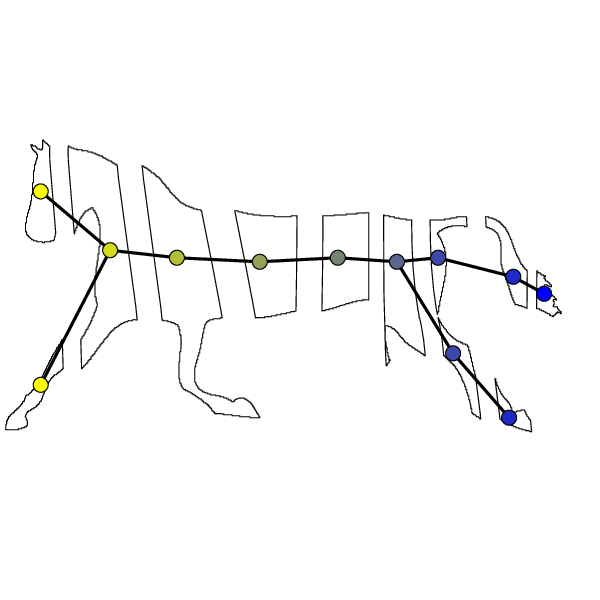

fig1

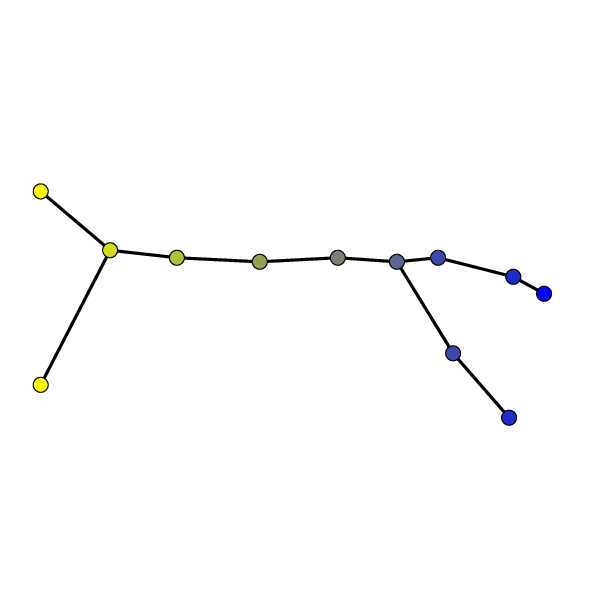

Why Non-linear?¶

fig2

Manifold Learning Methods¶

- MDS - Multidimensional Scaling: optimize an embedding to reduce the difference between original distances and embedding distances

- Isomap: use nearest neighbors to build a distance graph, traverse the graph to build distances between points, then use MDS

- Locally Linear Embedding (LLE): find nearest neighbors of each data point, use small neighborhood to build local linear regressions and then glue these to form a global structure

- Spectral Embedding: use Eigenvectors of the Graph Laplacian to create new coordinates

Topological Data Analysis¶

The field of Topological Data Analysis starts with a few observations and builds a new paradigm of data analysis techniques, designed to deal with highly complex datasets.

- Data Has Shape

- Shape Matters

Once the shape of data is identified as important, several features of topology emerge as useful:

- Coordinate Independence

- Deformation Resilience

- Compressed Representation

There are two main current directions of TDA:

- Mapper - constructing intrinsic models of data

- Persistent Homology - extending clustering to topological invariants that measure internal structures of clusters

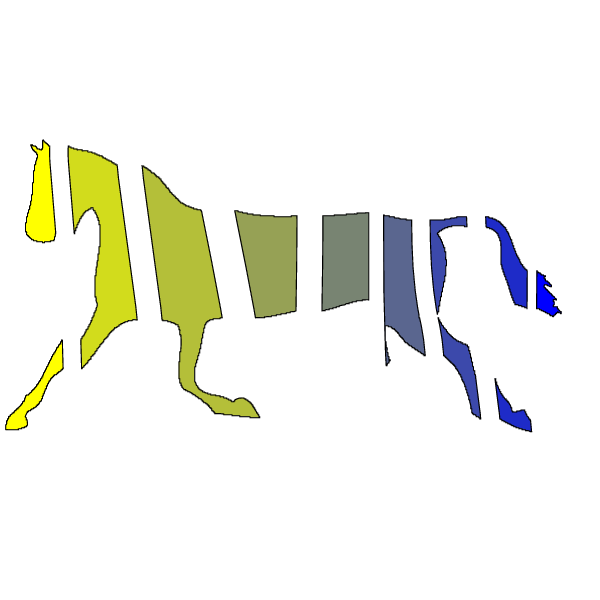

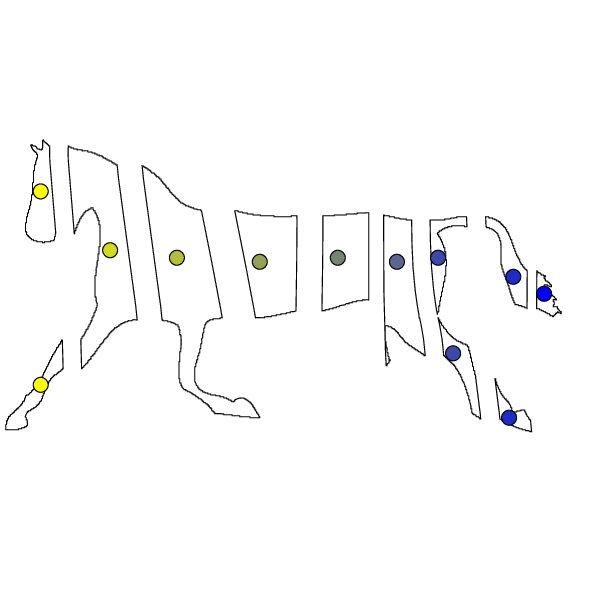

Mapper: graph / simplicial complex representations¶

Given: arbitrary dataset $X$, function $X\to\mathbb{R}^d$.

The role of the filter function is to determine what differences in the data matter to the user: what distinctions need to be preserved in the new representation.

Mapper then:

- Bins $X$ into overlapping bins according to the filter function values

- Clusters each bin separately

- Connects overlapping clusters from adjacent bins to create a graph / simplicial complex representing the original data

The Nerve lemma from algebraic topology tells us that if we are sufficiently lucky, the result is structurally equivalent to the original data.